Experts note that duplicate content is often unintentional plagiarism and is not meant to cause harm. Even so, duplicate content can hurt a site's search engine rankings, which in turn costs it potential customers.

Duplicate content is usually not penalized, algorithmically or manually, but copied content is. To maintain search engine rankings and keep content ‘unique,’ a website should rely on high-quality techniques such as an SEO audit rather than low-quality ones such as swapping in synonyms or paraphrasing.

If a website contains republished content, chances are high that search engines such as Google will prefer other websites’ ‘value-added’ content over the republished copy. Even when it is unintentional, republished content can still be flagged as plagiarism.

Website content must be high quality, as only that helps a site earn organic listings in Google. Unintentional plagiarism can be readily avoided by running a plagiarism checker while the website is still being designed.

How to Deal with Intentional and Unintentional Plagiarism?

Original content can raise the SEO rank of a website. Several genuine plagiarism checkers are available on the market that help a website deal with plagiarism in published work.

These plagiarism tools handle duplicate content issues by comparing text online. A duplicate content checker for SEO is a one-stop way to avoid plagiarism and handle plagiarism issues.

Prevent Plagiarism Through These Ways

Content marketers and SEO professionals who aim to improve site performance through SEO rankings must value unique, original content. Duplication is often unintentional and not meant to affect other published works.

When content is checked for plagiarism before it is published, the site is likely to perform better. Developers and SEO professionals should run anti-plagiarism software at different stages of website development to prevent plagiarism in published work.

These paid and free tools are known to analyze duplicated content semantically through online text comparison. Regular SEO audits also help keep a check on site insights, the interlinks between pages, site content, and the website's average traffic.

Social media platforms see duplicate content issues now and then. Tweets, blogs, comments: content theft turns up everywhere, often in the most audacious way.

Preventing and handling these issues can be a gruelling task, one made lighter by careful use of the online text comparison that plagiarism tools perform.
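To make the idea of text comparison concrete, here is a minimal sketch of one common approach: split each text into overlapping three-word sequences ("shingles") and measure how many the two texts share. This is only an illustration under stated assumptions. The function names `shingles` and `similarity` are hypothetical, and commercial plagiarism checkers may use quite different methods.

```python
import re

def shingles(text, size=3):
    """Return the set of overlapping word sequences ("shingles") in text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    if len(words) < size:
        # Very short texts yield a single shingle (or none if empty).
        return {tuple(words)} if words else set()
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}

def similarity(a, b, size=3):
    """Jaccard similarity of the two shingle sets, from 0.0 to 1.0."""
    sa, sb = shingles(a, size), shingles(b, size)
    if not sa or not sb:
        return 0.0
    return len(sa & sb) / len(sa | sb)
```

A score near 1.0 suggests the two texts are close copies, while a score near 0.0 suggests they share little wording. Where to draw the line between the two is a judgment call for each site.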

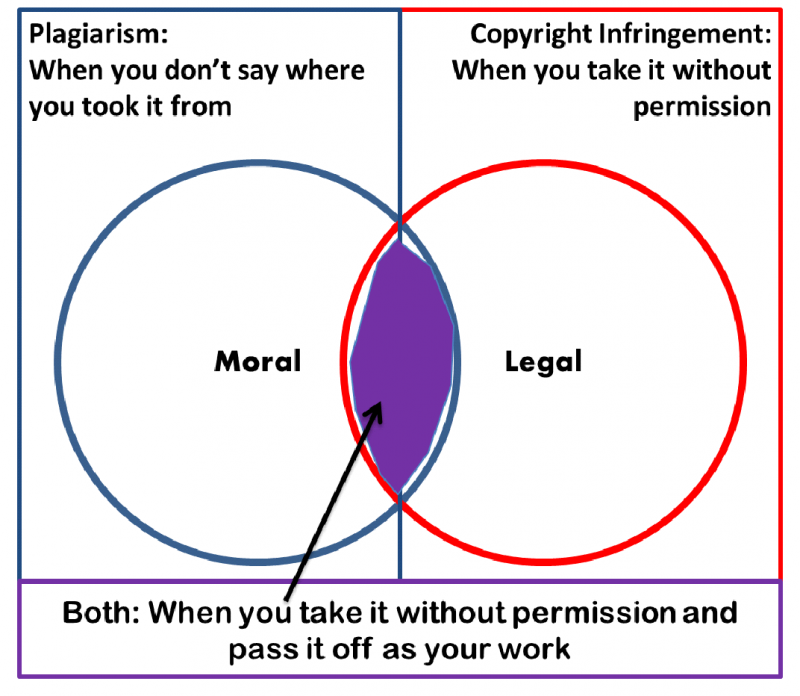

Copyright infringement

Writers and freelancers should assert copyright protection for their content to keep content scrapers and spammers from stealing it. Content scrapers copy the contents of other websites and blogs and pass them off as their own.

A copyright infringement report submitted to Google can lead to the copies made by these so-called ‘thieves’ being removed from its search results.

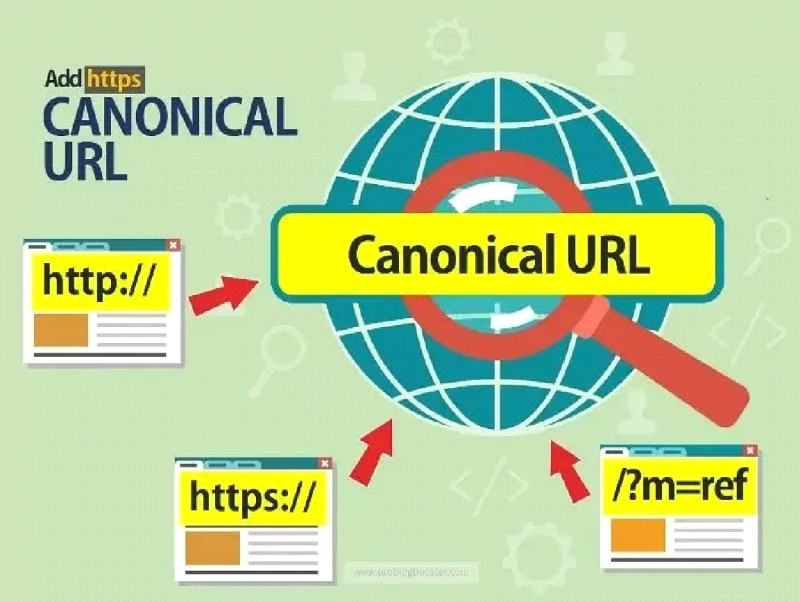

Canonical tags

Another way to neutralize the intentions of the ‘thieves’ is to use canonical tags. Web designers are advised to add a canonical tag to each page so that crawlers are pointed back to the original web page.

It is an effective way to keep copied content from taking the original's search engine rankings and to restore those rankings to the original website.
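As a rough illustration, a canonical tag is a `<link rel="canonical">` element placed in the page's `<head>`. The sketch below builds such a tag and reads one back out of a page using only Python's standard library. The helper names `canonical_tag` and `find_canonical` are hypothetical, and the URL is a placeholder.

```python
from html import escape
from html.parser import HTMLParser

def canonical_tag(url):
    """Build the <link> element that belongs in the page's <head>."""
    return '<link rel="canonical" href="{}">'.format(escape(url, quote=True))

class CanonicalFinder(HTMLParser):
    """Record the href of the first rel="canonical" link in a document."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        rel = (attrs.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel and self.canonical is None:
            self.canonical = attrs.get("href")

def find_canonical(html_text):
    """Return the canonical URL declared in html_text, or None if absent."""
    finder = CanonicalFinder()
    finder.feed(html_text)
    return finder.canonical

# Placeholder URL, used for illustration only.
page = "<html><head>" + canonical_tag("https://www.example.com/shoes") + "</head></html>"
print(find_canonical(page))
```

A check like `find_canonical` could be run across a site during an SEO audit to confirm that every page declares the URL it is supposed to.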

Limiting article distribution

When a website is building links to a particular site, the same content is often reused on many different web pages. This is unintentional plagiarism, but it drags down the performance of the original site.

The solution is to limit how widely the content is distributed. This restricts the resulting duplication and helps restore the search engine rankings.

Passing content through an online plagiarism checker

A common source of duplication is product descriptions spread across web pages. Usually, the same description of a particular product is used on several different pages.

Using quality plagiarism detection tools, which are widely available online, is a sound solution to this problem. These plagiarism checkers analyze the descriptions and point to the different pages on which each description appears.
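For a site that already has its product descriptions in hand, a simple in-house check can find pages that reuse the same description before an external tool is involved. The sketch below is one possible approach, not how any particular checker works. It assumes a hypothetical `pages` dictionary that maps each page URL to its description text.

```python
import hashlib
import re
from collections import defaultdict

def fingerprint(text):
    """Hash the description after removing case, spacing and punctuation differences."""
    normalized = " ".join(re.findall(r"\w+", text.lower()))
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()

def duplicate_groups(pages):
    """Return lists of URLs whose descriptions are identical after normalization."""
    groups = defaultdict(list)
    for url, description in pages.items():
        groups[fingerprint(description)].append(url)
    return [sorted(urls) for urls in groups.values() if len(urls) > 1]

# Hypothetical sample data, used for illustration only.
pages = {
    "/shirts/blue": "Soft cotton T-shirt.",
    "/sale/blue-shirt": "soft  cotton t-shirt",
    "/scarves/wool": "Warm wool scarf.",
}
print(duplicate_groups(pages))
```

This catches only exact repeats once case, spacing and punctuation are ignored. Lightly reworded descriptions would need a similarity measure instead.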

Generating internal links

Creating more internal links across the different pages of a product description helps search engines find the original content more easily.

Tagging for a single category

When a content management system applies multiple tags to a single product category, duplicated content becomes common. The issue can be handled by tagging the category once rather than with multiple tags.

Ensuring alternate versions of the URL

Redundant URLs can cause content duplication. The web designer must ensure that alternate versions of a URL redirect to the canonical URL. This helps restore the rankings of the original URL.
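One way to picture this is a small function that maps every alternate form of a URL to a single canonical form and reports when a permanent (301) redirect is needed. The sketch below makes several assumptions that will differ from site to site: the canonical host is `www.example.com`, HTTPS is preferred, trailing slashes are dropped, and the tracking parameters in `TRACKING_PARAMS` are safe to discard. The function names are hypothetical.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of query parameters that do not change the page content.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "ref"}

def canonical_url(url, host="www.example.com"):
    """Map an alternate URL to the single preferred form (assumed rules)."""
    parts = urlsplit(url)
    path = parts.path.rstrip("/") or "/"
    kept = [(k, v) for k, v in parse_qsl(parts.query)
            if k.lower() not in TRACKING_PARAMS]
    return urlunsplit(("https", host, path, urlencode(kept), ""))

def redirect_for(url, host="www.example.com"):
    """Return (301, target) if url should redirect, or None if already canonical."""
    target = canonical_url(url, host)
    return None if url == target else (301, target)

print(redirect_for("http://example.com/shoes/?utm_source=mail&color=red"))
print(redirect_for("https://www.example.com/shoes?color=red"))
```

In practice the redirect itself is usually configured in the web server or the content management system. The function only shows the decision being made.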

Using a Noindex Meta tag

A/B testing and ad landing pages tend to generate multiple versions of the same content, which leads to duplication. Adding a noindex meta tag to each of these variant pages reduces the chances of duplicate content appearing in search results.
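As a brief sketch, the noindex directive is a `<meta name="robots" content="noindex">` element in the page's `<head>`. The hypothetical template helpers below add it only to test variants and leave the main page indexable. The function names and the `is_test_variant` flag are assumptions made for illustration.

```python
from html import escape

def robots_meta(noindex):
    """Return the robots meta tag, or an empty string if the page should be indexed."""
    return '<meta name="robots" content="noindex">' if noindex else ""

def render_head(title, is_test_variant):
    """Build a minimal <head>; only A/B or ad landing variants get noindex."""
    parts = ["<title>{}</title>".format(escape(title))]
    tag = robots_meta(is_test_variant)
    if tag:
        parts.append(tag)
    return "<head>" + "".join(parts) + "</head>"

print(render_head("Summer Sale", is_test_variant=True))
print(render_head("Summer Sale", is_test_variant=False))
```

Keeping the decision in one place makes it harder to leave noindex on the main page by accident, which would remove that page from search results as well.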